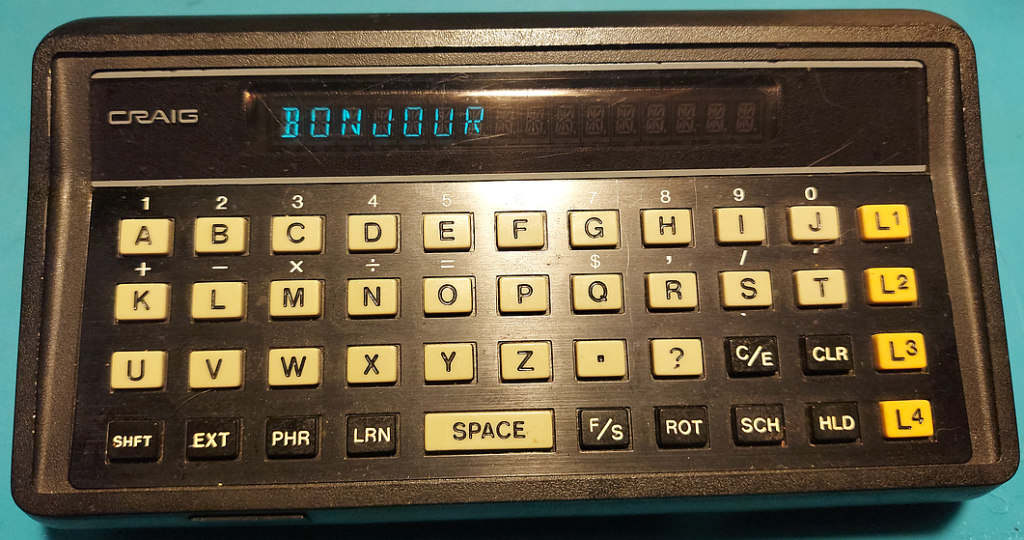

The Craig M100 was a cool little 80s-era gizmo that could translate basic tourist phrases among three selected languages and act as a basic language coach, showing word and phrase pairs to refresh your memory.

It also came with a calculator function — and from working with it, I get the impression that this was done 100% in software because someone in Management thought it was a good idea. It’s adequate for splitting a restaurant bill — barely — but you might beat it with pencil and paper, and a competent abacus user could wipe the floor with it.

There were relatively inexpensive electronic calculators available when the Craig M100 was produced, and they had no such speed problems, doing all four basic arithmetic operations in a fraction of a second. Without opening the M100 up to look, my guess is that, for economic reasons, Craig’s engineers used a very simple microcontroller, since its intended use was basically to display words and phrases from a stock ROM. The most they probably envisioned it doing was the occasional word search (which, in a sorted array, you can do readily enough by binary search).
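To show why a word lookup is cheap even on a tiny chip, here is a minimal binary search sketch in Python. The word list and the `find_word` name are made up for illustration; I have no idea how the M100's ROM is actually laid out.

```python
def find_word(sorted_words, target):
    """Return the index of target in sorted_words, or -1 if it is absent.

    Each pass halves the remaining range, so a list of 1,000 words
    needs at most about 10 comparisons.
    """
    lo, hi = 0, len(sorted_words) - 1
    while lo <= hi:
        mid = (lo + hi) // 2
        if sorted_words[mid] == target:
            return mid
        if sorted_words[mid] < target:
            lo = mid + 1   # target sorts after the midpoint
        else:
            hi = mid - 1   # target sorts before the midpoint
    return -1


# Hypothetical phrasebook entries, already in alphabetical order.
WORDS = ["airport", "bread", "hotel", "police", "station", "water"]

print(find_word(WORDS, "hotel"))
print(find_word(WORDS, "taxi"))
```

Nothing here needs more than integer compares and a shift, which is exactly the kind of work a bargain microcontroller is happy to do.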

But floating-point operations, especially division, are trickier. Most low-power microcontrollers see the world in bytes, which are interpreted as integers from 0 to 255, inclusive. Getting these simple symbols to handle not only floating-point math but base-10 arithmetic is nontrivial (unless you’re living in 2023, when every programming language worth anything has libraries for this sort of thing).
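For a feel of what software decimal division involves, here is a rough Python sketch of schoolbook long division that produces one base-10 digit at a time using only compare and subtract. This is my guess at the general flavor of the job, not the M100's actual firmware, and `divide_decimal` and its parameters are invented for the example. It also cheats: the running remainder is an ordinary Python integer, where a real 8-bit chip would have to juggle it across several bytes.

```python
def divide_decimal(dividend_digits, divisor, frac_digits=4):
    """Long division by repeated subtraction.

    dividend_digits: the integer dividend as a list of decimal digits,
                     most significant first (e.g. [1, 0, 0] for 100).
    divisor:         a positive integer.
    frac_digits:     how many digits to produce after the decimal point.

    Returns (quotient_digits, subtractions). The last frac_digits entries
    of quotient_digits are the fractional part.
    """
    if divisor <= 0:
        raise ValueError("divisor must be a positive integer")

    quotient = []
    subtractions = 0
    remainder = 0
    # Appending zeros to the dividend yields the fractional digits.
    for digit in list(dividend_digits) + [0] * frac_digits:
        remainder = remainder * 10 + digit  # "bring down" the next digit
        q = 0
        while remainder >= divisor:         # no divide instruction needed
            remainder -= divisor
            q += 1
            subtractions += 1
        quotient.append(q)
    return quotient, subtractions


digits, steps = divide_decimal([1, 0, 0], 7)
print(digits, steps)  # digits read as 014.2857
```

Even this simplified version loops up to nine times per output digit, and every one of those subtractions becomes a multi-byte borrow chain on an 8-bit part. It is not hard to imagine the seconds adding up.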

Check out the video of the Craig performing division, at a pace not seen since calculations were done with gears and levers. This isn’t a bug — it’s working as it was designed!

My current video card, a cheap-and-cheerful RTX 2060, has a theoretical top calculation speed of 12.9 TFLOPS (12.9 trillion floating-point operations per second). The M100 takes something like eight or nine seconds to do one division, making it something like a hundred and twelve trillion times slower! Yeah, it’s from 1979, but still.
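The back-of-the-envelope math, as a quick Python check. The 12.9 TFLOPS figure and the eight-to-nine-second timing are the numbers quoted above; the `slowdown` helper is just a name I made up for the multiplication.

```python
GPU_FLOPS = 12.9e12  # theoretical peak quoted above, operations per second


def slowdown(seconds_per_operation, flops=GPU_FLOPS):
    """How many operations the GPU could (in theory) do in the time
    the calculator spends on a single one."""
    return flops * seconds_per_operation


for seconds in (8.0, 9.0):
    print(f"{seconds:.0f} s per division -> {slowdown(seconds):.3g}x slower")
```

Eight seconds works out to about 103 trillion and nine seconds to about 116 trillion, so the ballpark holds wherever the stopwatch lands.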

Managers, if your engineers tell you something doesn’t make sense — please listen.