Is this coding? It sure doesn’t seem like it, but it’s certainly faster than the old way!

This was written entirely by GPT-OSS-20B from a single prompt, with no human-contributed code.

Since 2022 or so, we’ve learned that (since programming is at least partially a language-based task) large, multi-billion-dollar LLMs can often produce useful, working code. But we’re now getting to the point where even open-source language models that can run on consumer hardware can do useful coding work, at least if properly prepared.

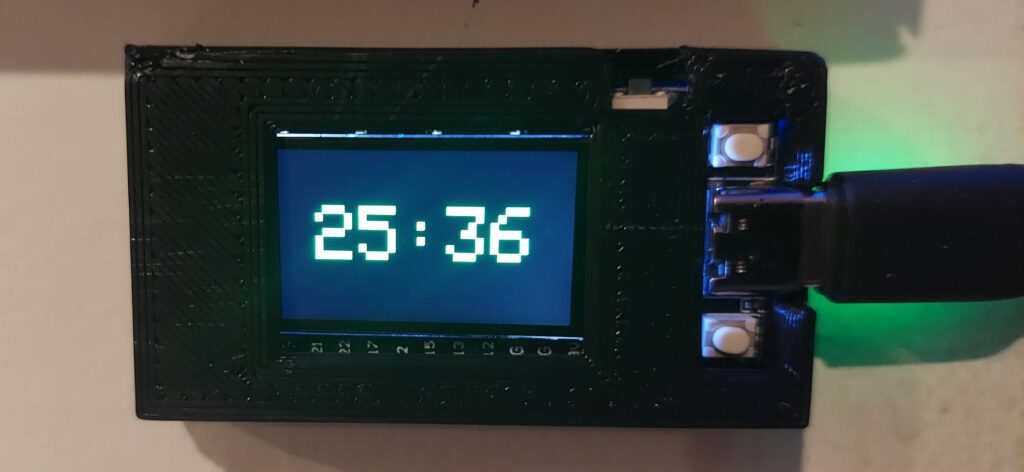

I recently learned about a useful new ESP32 form factor that everybody seems to be calling the “CYD” (for “Cheap Yellow Display”). It has all of the usual ESP32 goodies (a dual-core processor, 240MHz clock speed, WiFi, Bluetooth, and so on) plus a 320×240 resistive touchscreen display. It would make a nice modern thermostat or data readout or whatever.

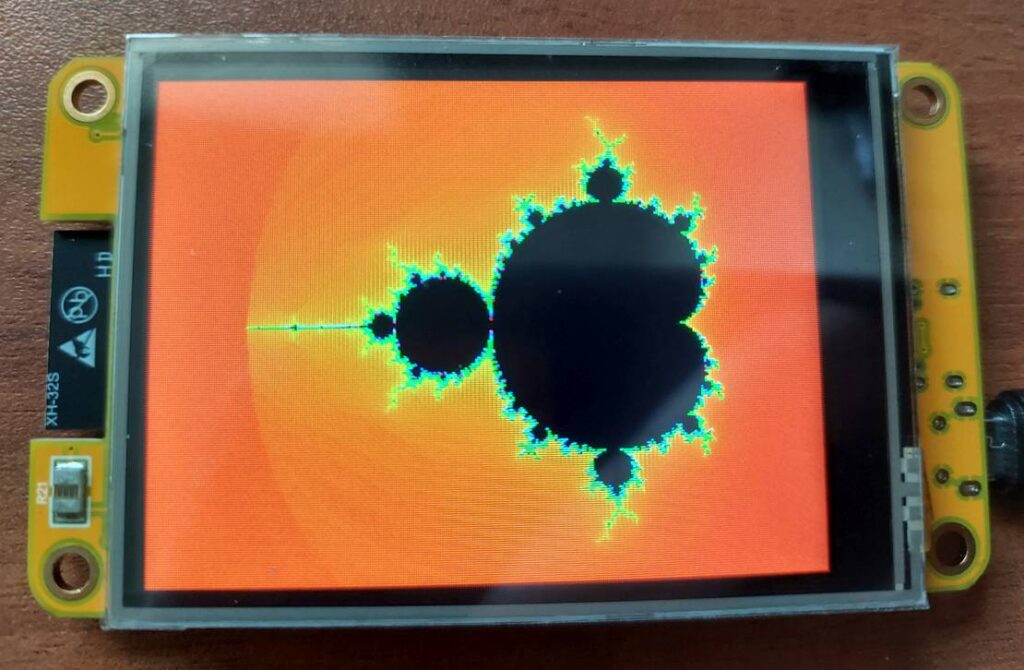

When I work with new hardware or new languages, one of the first familiarization tasks I usually start with is to write a Mandelbrot viewer for it. 320×240 isn’t exactly High Definition, but with 16-bit color, it’s starting to be useful for graphical applications like this.

Instead of writing the Mandelbrot zoomer myself (I’m already familiar with Bodmer’s excellent TFT_eSPI library), I decided to see how well GPT-OSS-20B would do with the task. Since local models may or may not have working Internet search capability, I decided to give it a primer. I asked GPT5.2 to write a simple demo sketch that listened for touch sensor input and then drew a green dot at that location, and that also provided (in comments) the syntax for other library functions. Adding this sketch to the prompt would show a local LLM how the graphics and touchscreen functions work, so they could be incorporated into new code. (After all, it’s a lot more reasonable to expect a model to know C than to be familiar with specific libraries.)

Here is the complete prompt (including the Arduino skeleton sketch from GPT5.2) that I provided:

The following is an example Arduino sketch for an ESP32-based “CYD” dev board with a 320×240 pixel touchscreen display. Please create a Mandelbrot Set viewer with a touchscreen interface, using this exact board hardware setup as defined in the “BOARD-SPECIFIC CONSTANTS” section. Various methods for drawing to the screen and reading touch inputs are demonstrated and/or described in the comments. Use these functions to implement a Mandelbrot Set viewer. On reset, start with a view of the whole Set, zoomed appropriately. Once the Set is drawn and a point on the screen is touched, zoom in at a factor of 2.0 (in both x and y), centered on that point. Start with 100 iterations (settable by parameter) and increase as needed. Choose an appropriate color map that will be visible at high and low zoom levels. Use Float for the first 16 zooms, and Double thereafter. Track all position variables as type double. Thanks.

[skeleton Arduino sketch pasted into prompt; file available below]
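To make the request concrete, here is a minimal C++ sketch of the arithmetic the prompt describes: mapping a pixel to the complex plane, counting escape-time iterations, zooming 2× on a touched pixel, and switching from float to double after 16 zooms. This is not the code GPT-OSS-20B produced. The `View` struct, the function names, and the initial framing (centered at -0.75, 3.5 units wide) are assumptions made for illustration, and the display and touch calls are left out so that it compiles as plain C++.

```cpp
#include <cstdint>

// Screen size from the post; everything else here is illustrative.
constexpr int SCREEN_W = 320;
constexpr int SCREEN_H = 240;
constexpr int FLOAT_ZOOM_LIMIT = 16;  // float for the first 16 zooms, per the prompt

// Hypothetical view state: the complex-plane point at the screen center,
// the size of one pixel, and how many zooms have been applied.
// Positions are tracked as double, as the prompt requests.
struct View {
    double centerX;
    double centerY;
    double pixelSize;
    int    zoomLevel;
};

// Assumed starting frame that fits the whole Set on a 320-pixel-wide screen.
View initialView() {
    return View{-0.75, 0.0, 3.5 / SCREEN_W, 0};
}

// Map a screen pixel to a point in the complex plane.
// Screen y grows downward; the same mapping is used everywhere, so zooms stay consistent.
double pixelToRe(const View& v, int px) { return v.centerX + (px - SCREEN_W / 2) * v.pixelSize; }
double pixelToIm(const View& v, int py) { return v.centerY + (py - SCREEN_H / 2) * v.pixelSize; }

// Re-center on the touched pixel and shrink the pixel size by `factor`.
View zoomAt(const View& v, int px, int py, double factor = 2.0) {
    View out = v;
    out.centerX   = pixelToRe(v, px);
    out.centerY   = pixelToIm(v, py);
    out.pixelSize = v.pixelSize / factor;
    out.zoomLevel = v.zoomLevel + 1;
    return out;
}

// Standard escape-time loop: iterate z = z^2 + c until |z|^2 > 4 or maxIter is reached.
// Templated so the same loop runs in float or double.
template <typename Real>
int escapeIterations(Real cRe, Real cIm, int maxIter) {
    Real zRe = 0, zIm = 0;
    for (int i = 0; i < maxIter; ++i) {
        Real re2 = zRe * zRe;
        Real im2 = zIm * zIm;
        if (re2 + im2 > Real(4)) return i;
        zIm = Real(2) * zRe * zIm + cIm;
        zRe = re2 - im2 + cRe;
    }
    return maxIter;  // never escaped: treat the point as inside the Set
}

// Pick the precision from the zoom level, then count iterations for one pixel.
int iterationsForPixel(const View& v, int px, int py, int maxIter) {
    const double re = pixelToRe(v, px);
    const double im = pixelToIm(v, py);
    if (v.zoomLevel < FLOAT_ZOOM_LIMIT) {
        return escapeIterations<float>(static_cast<float>(re), static_cast<float>(im), maxIter);
    }
    return escapeIterations<double>(re, im, maxIter);
}
```

A real sketch would call something like `iterationsForPixel` for every pixel, draw the result, and replace the view with `zoomAt(view, touchX, touchY)` on each touch. The prompt leaves the iteration schedule open ("increase as needed"), so `maxIter` is simply passed in here.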

The gpt-oss-20b model, running under Ollama with a 64k-token context window, took about an hour to think about the problem. At one point, I was concerned that it was getting stuck in a loop: it went on and on for dozens of lines about “Now I need to think about X” and “Now I need to think about Y,” some of which made more sense than others. I decided to go watch some YouTube videos and wait to see what happened. After an hour or so, it finished and produced a plausible-looking sketch. I copied and pasted it into the Arduino IDE, hit Upload, and waited.

It worked. I didn’t have to change anything. The touch inputs work correctly, the images are correct, and even the color map is appropriately chosen and works at multiple zoom levels.
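For a sense of what a zoom-friendly color map can look like, here is one common approach, sketched in plain C++: cycle through a short palette based on the iteration count, so the color bands stay visible no matter how high the iteration limit climbs. This is not the color map the model chose. The palette, the 96-step cycle, and the names `rgb565` and `colorFor` are hypothetical, and the code assumes the display takes 16-bit colors in RGB565 format (5 bits red, 6 green, 5 blue).

```cpp
#include <cstdint>

// Pack 8-bit red, green, and blue into one 16-bit RGB565 value.
constexpr uint16_t rgb565(uint8_t r, uint8_t g, uint8_t b) {
    return static_cast<uint16_t>(((r & 0xF8) << 8) | ((g & 0xFC) << 3) | (b >> 3));
}

// Hypothetical cyclic palette: blue -> red -> green -> blue, repeating every 96 iterations.
// Points that never escaped (iter >= maxIter) are drawn black.
uint16_t colorFor(int iter, int maxIter) {
    if (iter >= maxIter) return 0x0000;

    const int phase   = iter % 96;         // position within one palette cycle
    const int segment = phase / 32;        // which of the three ramps we are on
    const int step    = (phase % 32) * 8;  // 0..248 along that ramp

    const uint8_t up   = static_cast<uint8_t>(step);
    const uint8_t down = static_cast<uint8_t>(255 - step);

    switch (segment) {
        case 0:  return rgb565(up, 0, down);   // blue fading to red
        case 1:  return rgb565(down, up, 0);   // red fading to green
        default: return rgb565(0, down, up);   // green fading back to blue
    }
}
```

Because the palette repeats, neighboring iteration counts always get distinct colors, which is what keeps detail visible at deep zooms where the counts cluster near the limit.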

This is as big a change as going from machine code to assembly, or from assembly to higher-level languages. Maybe even more profound. We’re at the very least going to see the barrier to entry for writing code effectively removed, allowing anyone to code, and allowing existing engineers to focus on higher-level aspects of design.

And that’s the conservative, pessimistic view.